I could not have timed it better if I had tried. I seemed always to be in the right place at the right time. From the day I took my first “real” job (one with a salary and benefits, not just an hourly wage) in 1967, until the time I hung up my spurs (in 2009), my skill set kept pace with whatever was in demand at the time.

This post contains highlights from the early part of my career in computing and quantitative analysis. I also comment on an article that describes hand-held devices in use before the age of electronics. In a follow-up post, I will describe my move to the Big Time, and comment on another article, about the future of coding, as a sample of how technology will change the way analysis is done.

My career had a heavy technological bent. My first such job had been created by the need for people to implement a conversion then being made from second-generation computers (powered by printed circuit boards) to third-generation machines (introducing integrated circuits, which brought capabilities that had previously not been possible, such as multi-programming — alias timesharing).

[see How I became a computer programmer — today, that position would be described as a software engineer in Information Technology]

I spent several enjoyable years at the forefront of what was then called Data Processing. I worked in a sophisticated environment, learned to code in several computer languages, and became a systems analyst, a trainer, and a project supervisor.

Computers had been used in government and business for many years, tracing their origin back to the Hollerith cards used to tabulate the 1890 U.S. Census. But most people had no direct connection with computers. Desktop computers did not exist, and long-distance communication between computers was unknown. The handheld computers we take for granted today (alias cellphones) were known only in fiction, such as Dick Tracy’s 2-way wrist radio.

I started out by learning COBOL, in my first job as a programmer, in the Data Processing Department of Connecticut General Life Insurance Company (CG). That was the language used to program the newly-purchased RCA Spectra 70. The company had spent $7 million to acquire that machine ($65 million in today’s dollars). The Spectra 70 was the equivalent of the more widely-used IBM System/360.

For that steep price, CG had acquired state-of-the-art capability, boasting all of 32K of main memory (approximately enough to hold a 20-page single-spaced document). It was run by TOS ([magnetic] Tape Operating System). The economic justification was that automation would replace a multitude of clerks who were entering and tracking transactions by hand on 3-by-5 index cards.

The existing computer systems (such as billing and claim processing) were processed on a second-generation RCA 501. That machine took up an enormous amount of space, with its refrigerator-sized cabinets for reading and writing magnetic tape reels. If you saw the original Star Trek TV series that began in 1966, you would recognize the console of the 501, because it was used as the console of the Starship Enterprise.

I also had to learn how to program the 501 because it was necessary to keep the old systems running while their replacements were under development. The 501 was an octal (base 8) machine, meaning that its memory was read in octal digits, each standing for 3 bits (set to zero or one). The third-generation machines, by contrast, were hexadecimal (base 16) machines (each hexadecimal digit stood for 4 bits, and two digits made up an 8-bit byte).

All of this was important only because we had to learn to read computer memory and instructions in their native language. If a program “hung” (could not go any further because of an internal error), the computer operator would print out what was in memory at the time so that the programmer could figure out what went wrong.
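For readers who have never read a memory dump, here is a minimal Python sketch of the idea (modern code, not anything we ran at the time). It takes one string of bits and renders it the way an octal dump and a hexadecimal dump would: three bits per octal digit, four bits per hexadecimal digit. The function names and the sample bit pattern are invented for illustration.

```python
def group_bits(bits: str, size: int) -> list[str]:
    """Split a bit string into groups of `size`, left-padding with zeros."""
    padded_length = -(-len(bits) // size) * size  # round up to a multiple of size
    padded = bits.zfill(padded_length)
    return [padded[i:i + size] for i in range(0, len(padded), size)]


def to_digits(bits: str, size: int) -> str:
    """Render each group of bits as one digit (size=3 gives octal, size=4 gives hex)."""
    return "".join(format(int(group, 2), "X") for group in group_bits(bits, size))


sample = "110101011100"  # hypothetical 12 bits of memory

print(to_digits(sample, 3))  # 110 101 011 100 -> 6534
print(to_digits(sample, 4))  # 1101 0101 1100  -> D5C
```

The same twelve bits come out as four octal digits or three hexadecimal digits, which is why a programmer moving between the two machines had to learn to read both notations.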

The two machines had different methods of inputting our programs. On the Spectra 70, our coding was punched into Hollerith cards. For the 501, our instructions were punched into paper tape. In both cases, we had to be able to hold the input medium up to the light so as to read and understand it the way the computer did.

If a card was bad, a corrected card could be punched to replace the erroneous card. For paper tape, it was a bit more complicated, because the entire program had to be read as one continuous piece of tape. Once the offending instruction was identified by the programmer (usually by reading the memory dump), a corrected one was punched on tape. Then, the challenge was to find the bad instruction by reading the octal characters on the long paper tape that contained the program (there was no printing on the tape). Once the erroneous location was found, a pair of scissors would excise the bad portion of the program. We had a special machine for literally patching the program. The new patch would be put in place and Scotch tape was used to connect it with the existing reel. That new connection was placed into a heated vise that would weld the plastic and paper tapes into a junction that was strong enough to withstand the pressure of being pulled through the high-speed paper-tape reader.

After several years of dealing with insurance applications (including the then-new Medicare payment system), I decided to look for a different challenge.

In 1976, with a new Master’s degree in Economics in my back pocket, I moved into investment analysis. I used my computer skills to analyze stock and bond portfolios, stock options, and complex investment schemes. CG owned a REIT and formed partnerships with property developers. My analysis often had to create results for four sets of books: one for the partnership, one for the insurance regulators, one for the IRS, and (most importantly) one for shareholders.

The computer environment was evolving. I no longer had easy access to CG’s mainframe computers, but remote computing was becoming popular. We had one terminal in the department. It was a teletype machine that operated at 30 CPS (characters per second). This post would take more than six minutes to print out (text only, no graphics).
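The arithmetic behind that estimate can be sketched in a few lines of Python. The function names are illustrative, and the only figure taken from the terminal itself is the 30 CPS rate.

```python
def print_time_minutes(num_chars: int, cps: int = 30) -> float:
    """Minutes needed to print `num_chars` characters at `cps` characters per second."""
    return num_chars / cps / 60


def chars_printed(minutes: float, cps: int = 30) -> int:
    """Characters a terminal running at `cps` can print in `minutes`."""
    return int(minutes * 60 * cps)


# Six minutes at 30 CPS covers 10,800 characters, so any text longer
# than that takes more than six minutes to print.
print(chars_printed(6))
print(print_time_minutes(10_800))
```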

APL (a programming language) had become popular with the company’s actuarial students, so I had access to an interactive (on-line) version of that. We also used the GE timesharing service that offered the Dartmouth BASIC programming language.

After persuading my boss that I could be much more productive if I didn’t have to rely on a slow and shared (often unavailable) terminal, I became a proud user of an early model of IBM’s first desktop computer, the 5520. It had a built-in CRT and a magnetic tape-cassette drive. Its two programming languages were BASIC and APL. CG plunked down about $20,000 (close to $100,000 in today’s dollars) for this precursor to the PC.

That sum was approximately equal to my annual salary at that time. I was about to become an Officer of the company, and trade in my Datsun 240Z for a BMW 320i. I really thought I was the cat’s meow in those heady days.

But I knew I was capable of more. In Part Two of this story, I tell of my decision to move to the Big Time (New York City, and, eventually, Wall Street). For now, I will segue into one of the articles I mentioned.

Around that time (1976), personal electronic devices were replacing mechanical ones. I remember paying $79 (over $300 in today’s dollars) for a plug-in calculator made by Royal.

As you can see, this marvel of a machine could add, subtract, multiply, and divide. Amazing! There are a couple of other functions as well, though my main use was to do those arithmetic operations with large columns of numbers. Notice that there is no way to print the results, nor any way to communicate with another machine. Yet, it was a very useful and timesaving device in its time.

Before my time

In days gone by, similar (and more complex) calculations could be made by various hand-held devices, as described in an article entitled “Instrumental Tricks” in the London Review of Books (5 October 2023). The author points to

… the first calculating devices, generally thought to be abacus variants that represented numbers as physical tokens such as stones placed in columns, which might be … strings arranged in a frame. (The Latin word for ‘count’, calculare, comes from calculus, ‘pebble’.)

These compact computing gadgets were the first “pocket calculators” and were in common use for many hundreds of years (dating back to at least the fourth century BCE). The next big thing came into existence in the 17th century.

As the name suggests, the appeal of the pocket calculator is all about compression – the smallness of the device itself, but more important its capacity to reduce the time and effort needed to carry out calculations. In this regard, the abacus was a shabby stand-in compared to the device that came next: the slide rule, a marvel of design that compressed mathematical work to the point of invisibility. The individual who deserves most credit for its invention [was] the English clergyman and mathematician William Oughtred (1574-1660) …

Donated by K&E to the Smithsonian:

The Electronic Calculator

In the early 1970s, a pocket calculator sold for hundreds of dollars and still had an air of mystique. ‘Look again,’ reads an article in the New York Times from 1972. ‘The person next to you, apparently holding a tiny radio in his hand, may actually be using a calculator.’ … Just a few years later, calculators were retailing for less than $20

In my work, I witnessed the intersection of those two worlds: the wonder of the slide rule being replaced by the pocket electronic calculator. I had learned how to use the slide rule in high school. It was part of the study of logarithms, and every student had a small plastic slide rule for practice. In the late 1970s I became proficient in calculating with the latest Texas Instruments (TI) pocket calculator (it required a large pocket).
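The connection between the slide rule and logarithms is that sliding one scale along the other adds two lengths, and each length is proportional to the logarithm of a number, so adding lengths multiplies the numbers. Here is a rough Python sketch of that principle. The function name is made up, and the rounding to three significant digits is my assumption about what a typical scale lets you read by eye.

```python
import math


def slide_rule_multiply(a: float, b: float, sig_digits: int = 3) -> float:
    """Multiply two positive numbers by adding their base-10 logarithms."""
    if a <= 0 or b <= 0:
        raise ValueError("the log scales only cover positive numbers")
    log_sum = math.log10(a) + math.log10(b)  # sliding the scales adds the lengths
    product = 10 ** log_sum                  # reading the result off the scale
    # Keep only as many significant digits as the eye can read (an assumption).
    return float(f"{product:.{sig_digits - 1}e}")


print(slide_rule_multiply(2.5, 3.2))
print(slide_rule_multiply(1.07, 1.07))
```

The answer is only as precise as the scale, which was usually good enough for the work at hand.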

In the Investment Department at CG, I reported to Bob Whalen, and his boss was Al Roby. Neither one of them stood on ceremony, and I often worked directly with Al on projects that required some actuarial expertise. He was a full-fledged Actuary, and was eager to teach me how to interpret actuarial tables, and how to use the many formulae of the actuarial trade.

By the time Al had finished tutoring me, I could easily have passed the first of the actuarial exams, but my career interests lay elsewhere. I was also studying to become a CFA (Chartered Financial Analyst), a three-year program that I would complete after I had moved to New York.

During one of our sessions, Al smiled as I struggled to compute some values using my fancy new TI calculator. “Let’s have a contest,” he suggested. I would use my electronic calculator and he would use his slide rule, and we would see who could arrive at the answer first.

While I was still punching the keys on the calculator, he announced his answer. I soon arrived at exactly the same number.

There was another Actuary, Dick Murphy, at CG who had an interest in investment problems. I relied on him to provide me with custom-made solutions to some of the more abstruse relationships I had to deal with. I had once asked him, for example, how to calculate the volume of the right-hand side of a lognormal distribution from any arbitrary point on the curve. He was not aware of any such formula, so he told me he would take the problem home and give it some thought. The next day, he came to me with eight handwritten pages of the derivation he had done.
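I no longer have those eight pages, so the following is not Dick's derivation. It is only a sketch of one possible reading of the question: the probability mass lying to the right of a point x on a lognormal curve. Under that assumption, if ln X is normal with mean mu and standard deviation sigma, the right-hand tail is 1 minus the standard normal CDF evaluated at (ln x - mu) / sigma. The function and parameter names are illustrative.

```python
import math


def lognormal_right_tail(x: float, mu: float, sigma: float) -> float:
    """P(X > x) when ln X is normal with mean `mu` and std dev `sigma`."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    if x <= 0:
        return 1.0  # a lognormal variable is always positive
    z = (math.log(x) - mu) / sigma
    # The upper tail of the standard normal, written with the complementary error function.
    return 0.5 * math.erfc(z / math.sqrt(2))


# At the median of the distribution (x = e**mu), half the mass lies to the right.
print(lognormal_right_tail(1.0, mu=0.0, sigma=0.25))
```

The question I actually asked may have called for something more involved, such as a value-weighted tail, which this sketch does not attempt.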

As I sought more and more challenging problems to solve, my interests turned to finding a job that offered an opportunity to grow.

In Part Two of this story, I will describe how I found such an opportunity, and I will take a look into the crystal ball, to speculate on the future of quantitative analysis.

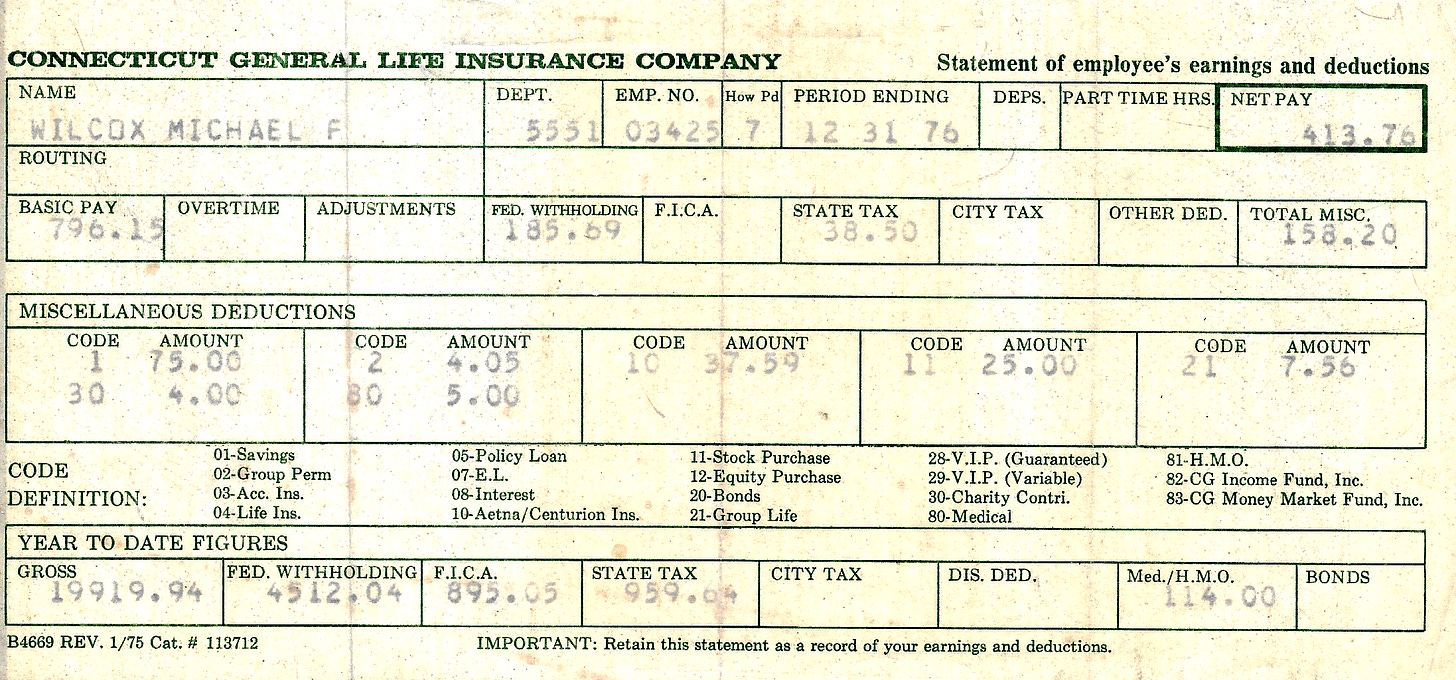

PS: My 1976 pay stub of $19,919.94 is equivalent to $110,157.27 in 2024 dollars.

MFW - A nice trip down memory lane.

I appreciate that you gave credit to the seminal contributors who advanced your early career. I believe you overlooked another important figure from that time. I speak, of course, of Heidi Berkowitz who inspired us to reach for heights never before attempted.

I remain convinced that the IBM 029 Replicating KeyPunch was the most reliable piece of computer equipment ever constructed. Although it was famously prone to operator error....if only we could eliminate the humans computing could be so much more efficient.

Michael, you never cease to amaze me. Great stuff.